The ‘changefreq’ is another optional attribute which ‘hints’ at how frequently the page is likely to change. In reality, Google do a very good job of working this out algorithmically.

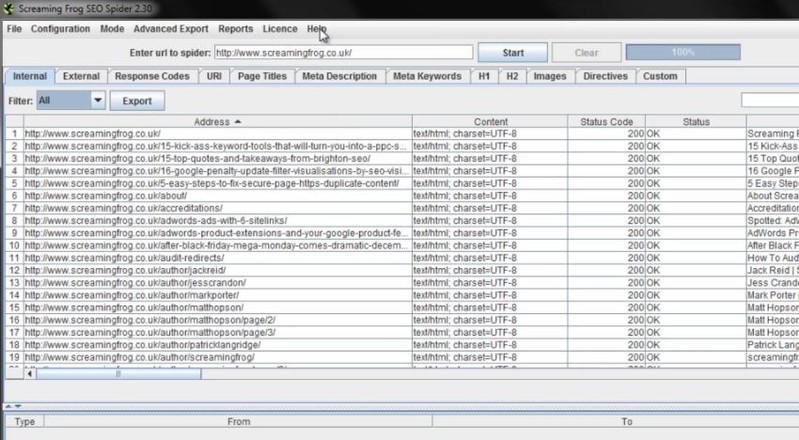

The ‘priority’ is used to increase the likelihood of the most important pages being crawled and indexed. Please remember, the ‘priority’ of URLs, will not influence how they are scored within the search engines. These can be adjusted to your own preference. You can view the ‘level’ of URLs under the ‘level’ column in the ‘Internal’ tab.Īs shown in the screenshot above, by default the homepage (or start page of the crawl) is set to the highest priority of ‘1’, descending by 0.1 in priority by each level of depth down to 0.5 for level 5+. The SEO Spider allows you to configure these based upon ‘level’ (the depth) of the URLs. Valid values range from 0.0 up to the highest priority of 1.0, with the default at 0.5. The priority provides a hint to the search engines of the importance of a URL, relative to other URLs on your site. You can ‘untick’ the ‘include priority tag’ box, if you don’t want to set the priority of URLs. ‘Priority’ is an optional attribute to include in an XML Sitemap. If you wish to include the ‘lastmod’, then simply select whether you’d like to use the ‘last modified’ response provided directly from your server (and seen within the ‘Last Modified’ column in the ‘Internal’ tab) or use a custom date. It’s just a hint to the search engines when the page was last updated. This is a completely optional attribute to include within an XML Sitemap, so you can ‘untick’ the ‘include the lastmod tag’ box if you don’t want to include the date of the last modification of the file. 
Alternatively you can export the ‘internal’ tab to Excel, filter and delete any URLs that are not required and re-upload the file in list mode, before generating the XML sitemap.If you have already crawled URLs which you don’t want included in the XML Sitemap export, then simply highlight them in the ‘internal tab’ in the top window pane, right click and ‘remove’ them, before creating the XML sitemap.As they won’t be crawled, they won’t be included within the ‘internal’ tab or the XML Sitemap. If there are sections of the website or URL paths that you don’t want to include in the XML Sitemap, you can simply exclude them in the configuration pre-crawl.There’s a few ways to make sure they are not included within the XML Sitemap – You shouldn’t include URLs with session ID’s (you can use the URL rewriting feature to strip these during a crawl), there might be some URLs with lots of parameters that are not needed, or just sections of a website which are unnecessary. If a page can be reached by two different URLs, for example and (and they both resolve with a ‘200’ response), then only a single preferred canonical version should be included in the sitemap. Outside of the above configuration options, there might be additional ‘internal’ HTML 200 response pages that you simply don’t want to include within the XML Sitemap.įor example, you shouldn’t include ‘duplicate’ pages within a sitemap. You can see which URLs are ‘noindex’, ‘canonicalised’ or have a rel=“prev” link element on them under the ‘Directives’ tab and using the filters as well. You can see which URLs have no response, are blocked, or redirect or error under the ‘Responses’ tab and using the respective filters. This can all be adjusted within the XML Sitemap ‘pages’ configuration, so simply select your preference. 
Pages which are blocked by robots.txt, set as ‘noindex’, have been ‘canonicalised’ (the canonical URL is different to the URL of the page), paginated (URLs with a rel=“prev”) or PDFs are also not included as standard. However, you can select to include them optionally, as in some scenarios you may require them. So you don’t need to worry about redirects (3XX), client side errors (4XX Errors, like broken links) or server errors (5XX) being included in the sitemap. Only HTML pages included in the ‘internal’ tab with a ‘200’ OK response from the crawl will be included in the XML sitemap as default. This will open up a number of sitemap configuration options. When the crawl has reached 100% and finished, click the ‘XML Sitemap’ option under ‘Sitemaps’ in the top level menu. Open up the SEO Spider, type or copy in the website you wish to crawl in the ‘enter url to spider’ box and hit ‘Start’. The next steps to creating a XML Sitemap are as follows –

If you’d like to crawl more than 500 URLs, you can buy an annual licence, which removes the crawl limit and opens up the configuration options. You can download via the buttons in the right hand side bar. To get started, you’ll need to download the SEO spider which is free in lite form, for up to 500 URLs. This tutorial walks you through how you can use the Screaming Frog SEO Spider to generate XML Sitemaps. How To Create An XML Sitemap Using The SEO Spider
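The level-based priority defaults described above follow a simple rule. The sketch below expresses it in plain Python. It is an illustration of the rule, not the SEO Spider's own code, and it assumes level 0 is the start page of the crawl.

```python
def default_priority(level: int) -> float:
    """Sketch of the default level-based priority: 1.0 at level 0,
    minus 0.1 per level of depth, never below 0.5 (level 5+)."""
    if level < 0:
        raise ValueError("level must be 0 or greater")
    # round() hides floating-point artefacts from 1.0 - 0.1 * level
    return round(max(1.0 - 0.1 * level, 0.5), 1)


for level in range(7):
    print(level, default_priority(level))
```

For levels 0 to 6 this gives 1.0, 0.9, 0.8, 0.7, 0.6, 0.5 and 0.5, matching the defaults listed in the article.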
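The following is a hedged sketch of the default inclusion rules described above. It filters a list of crawled URLs and writes the survivors as a minimal sitemap:

- Only 200 OK HTML pages are kept.
- Pages blocked by robots.txt, set as ‘noindex’, canonicalised, or carrying rel="prev" are left out.

The `CrawledUrl` record and its field names are hypothetical stand-ins for columns in the ‘Internal’ tab. They are not an SEO Spider export format.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import List, Optional

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


@dataclass
class CrawledUrl:
    # Hypothetical crawl record; field names are illustrative only.
    address: str
    status_code: int
    content_type: str
    blocked_by_robots: bool = False
    noindex: bool = False
    canonical: Optional[str] = None  # declared canonical URL, if any
    has_rel_prev: bool = False


def include_by_default(u: CrawledUrl) -> bool:
    """True if the URL passes the default rules described in the article."""
    if u.status_code != 200 or not u.content_type.startswith("text/html"):
        return False  # redirects, 4XX/5XX errors and non-HTML such as PDFs
    if u.blocked_by_robots or u.noindex or u.has_rel_prev:
        return False
    # 'Canonicalised': the canonical points somewhere other than the page itself.
    if u.canonical is not None and u.canonical != u.address:
        return False
    return True


def build_sitemap(urls: List[CrawledUrl]) -> str:
    """Serialise the included URLs as a minimal <urlset> of <loc> entries."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for u in urls:
        if include_by_default(u):
            url_el = ET.SubElement(urlset, "url")
            ET.SubElement(url_el, "loc").text = u.address
    return ET.tostring(urlset, encoding="unicode")


crawl = [
    CrawledUrl("https://example.com/", 200, "text/html; charset=utf-8"),
    CrawledUrl("https://example.com/old", 301, "text/html"),
    CrawledUrl("https://example.com/guide.pdf", 200, "application/pdf"),
    CrawledUrl("https://example.com/a?ref=x", 200, "text/html",
               canonical="https://example.com/a"),
]
print(build_sitemap(crawl))
```

A page whose canonical points to itself is kept, since that is not ‘canonicalised’ in the article's sense. Only the canonical target itself belongs in the sitemap.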
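The ‘lastmod’ option draws on two date formats. A server's ‘Last-Modified’ header uses the HTTP date format, while the sitemaps protocol expects a W3C Datetime value, which may be just a date such as 2015-10-21. The sketch below converts between the two with Python's standard library. It assumes that a custom date, if supplied, takes precedence over the header; that is an illustrative choice, not documented SEO Spider behaviour.

```python
from datetime import date
from email.utils import parsedate_to_datetime
from typing import Optional


def lastmod_value(last_modified_header: Optional[str],
                  custom_date: Optional[date] = None) -> Optional[str]:
    """Return a YYYY-MM-DD string for <lastmod>, or None to omit the tag."""
    if custom_date is not None:
        return custom_date.isoformat()
    if not last_modified_header:
        return None  # the server sent no Last-Modified header
    try:
        parsed = parsedate_to_datetime(last_modified_header)
    except (TypeError, ValueError):
        return None  # unparseable header: safer to leave lastmod out
    return parsed.date().isoformat()


print(lastmod_value("Wed, 21 Oct 2015 07:28:00 GMT"))
print(lastmod_value(None, custom_date=date(2024, 1, 31)))
```

Depending on the Python version, `parsedate_to_datetime` signals a bad header with `TypeError` (older releases) or `ValueError` (3.10+), so both are caught.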
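The article suggests stripping session IDs with the SEO Spider's URL rewriting feature. The same idea can be sketched outside the tool with `urllib.parse`:

1. Drop known session parameters from each query string.
2. De-duplicate the URLs that collapse together as a result.

The parameter names in `SESSION_PARAMS` are hypothetical examples; substitute whatever your site actually uses.

```python
from typing import Iterable, List
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical session parameter names (compared case-insensitively).
SESSION_PARAMS = {"sid", "sessionid", "phpsessid"}


def strip_session_params(url: str) -> str:
    """Remove session-ID query parameters, keeping all others in order."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if k.lower() not in SESSION_PARAMS]
    return urlunsplit(parts._replace(query=urlencode(kept)))


def unique_clean_urls(urls: Iterable[str]) -> List[str]:
    """Strip session IDs, then keep the first occurrence of each cleaned URL."""
    seen = set()
    result = []
    for url in urls:
        clean = strip_session_params(url)
        if clean not in seen:
            seen.add(clean)
            result.append(clean)
    return result


print(unique_clean_urls([
    "https://example.com/shop?cat=2&sid=abc123",
    "https://example.com/shop?cat=2&sid=def456",
    "https://example.com/about?PHPSESSID=xyz",
]))
```

`urlencode` re-encodes the remaining parameters. Values containing spaces or unusual characters may therefore come back in a different but equivalent encoding.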
